Endpoints can be configured to handle specific request methods, such as POST or GET, and offer flexibility to adjust various aspects, like returning custom headers, setting status codes, and more.

**Authentication:** You can now configure authentication directly on endpoints, eliminating the need to handle auth logic in your implementation scripts. This makes securing your endpoints simpler and more declarative.

**Copy to cURL:** Each endpoint includes a "Copy to cURL" button, allowing you to quickly copy the endpoint as a cURL command for testing or sharing.

You can implement these endpoints using Bash or Lua scripts, with RapidForge automatically injecting useful environment variables into your scripts.
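As a rough illustration of turning an existing command into an endpoint, the sketch below wraps the standard `df` utility and prints a small JSON document. It assumes that whatever the script writes to standard output becomes the response body, which this section does not confirm. `usage_percent` is a hypothetical helper name, and the sketch avoids the injected environment variables because their names are not listed here.

```bash
#!/usr/bin/env bash
# Sketch of an endpoint script (assumption: stdout becomes the response body).

# usage_percent is a hypothetical helper: it reads `df -P` output on stdin and
# prints the "Capacity" column of the first filesystem row without the % sign.
usage_percent() {
  awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Report disk usage of the root filesystem as JSON.
printf '{"disk_usage_percent": %s}\n' "$(df -P / | usage_percent)"
```

Keeping the parsing in a small function makes it easy to try the script locally before attaching it to an endpoint.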

## Pages

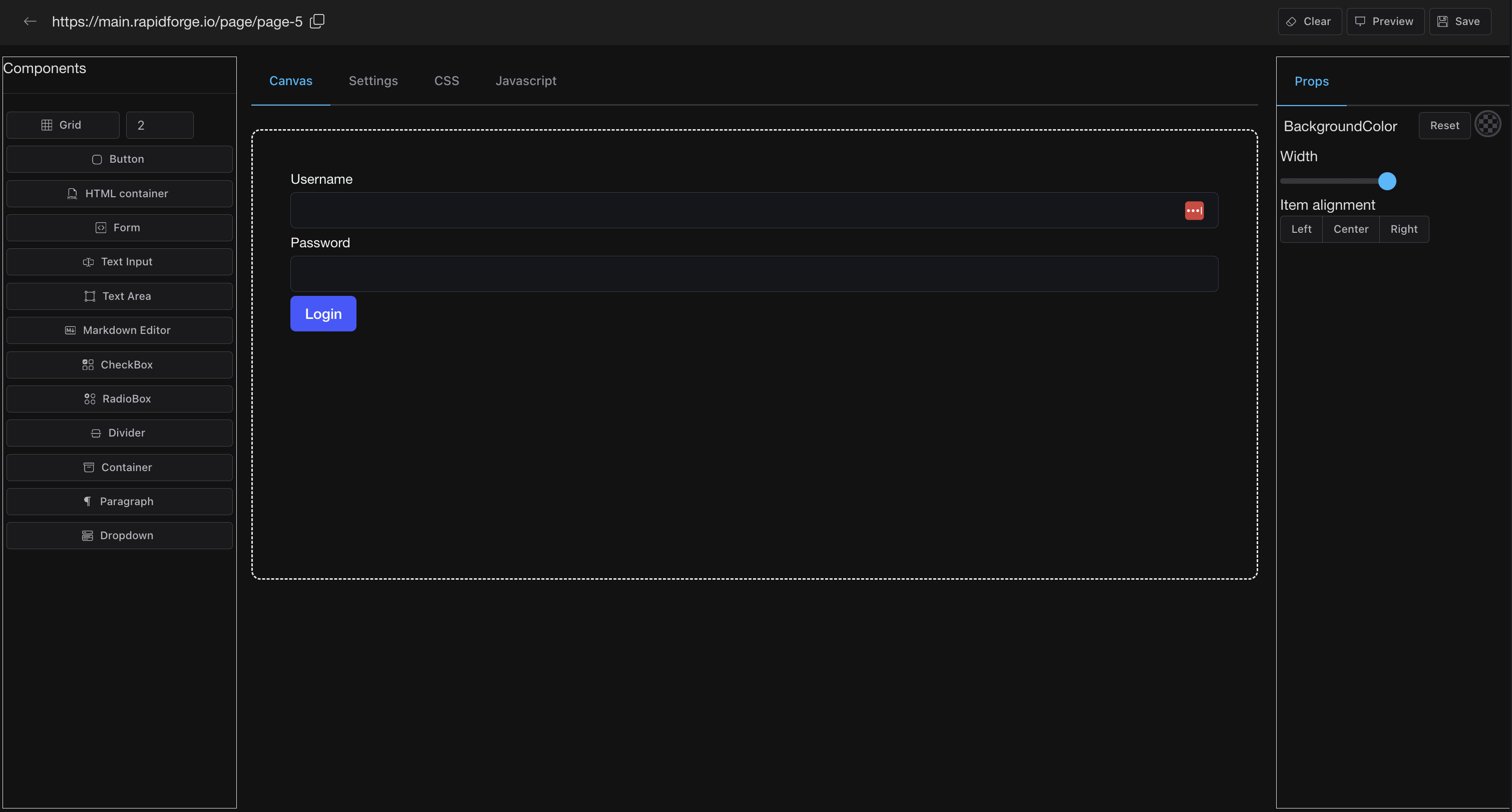

RapidForge features a drag-and-drop editor for easily creating web pages. Build forms and other content, then customize them with your own JavaScript and CSS. You can connect forms to API endpoints so that form data is sent automatically to your scripts for processing.

**Common use cases:**

- **Contact forms** - Create a contact form that sends submissions to your Slack channel or email

- **Feedback surveys** - Build feedback forms that store responses in your database

- **Admin dashboards** - Create internal tools to trigger scripts or view system status

The drag-and-drop editor lets you build these pages visually, while the ability to add custom CSS and JavaScript gives you full control over styling and interactivity.
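The contact form use case could look roughly like the following endpoint script. This is only a sketch: `FORM_EMAIL`, `FORM_MESSAGE`, and `CRED_SLACK_WEBHOOK_URL` are hypothetical variable names chosen for illustration, since this section does not say how form fields are exposed to scripts. The `{"text": ...}` body is the format Slack incoming webhooks accept.

```bash
#!/usr/bin/env bash
# Sketch: forward a contact form submission to Slack.
# FORM_EMAIL, FORM_MESSAGE, and CRED_SLACK_WEBHOOK_URL are hypothetical names.

# Escape backslashes and double quotes so a value can sit inside a JSON string.
# Newlines and other control characters are not handled in this sketch.
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Build the {"text": "..."} body that Slack incoming webhooks accept.
slack_payload() {
  local email message
  email=$(json_escape "$1")
  message=$(json_escape "$2")
  printf '{"text": "New message from %s: %s"}' "$email" "$message"
}

# Post the payload; call this from the endpoint script that receives the form.
notify_slack() {
  curl -sS -X POST -H 'Content-Type: application/json' \
    -d "$(slack_payload "$FORM_EMAIL" "$FORM_MESSAGE")" \
    "$CRED_SLACK_WEBHOOK_URL"
}
```

Escaping the submitted values before embedding them matters here, because form input is untrusted and an unescaped quote would produce invalid JSON.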

## Periodic Tasks (Cronjobs)

Periodic tasks are scheduled actions configured with cron syntax to run at specific times or on designated days. Audit records are available to verify proper execution.

**Common use cases:**

- **Health checks** - Monitor your services every 5 minutes and send alerts if they're down

- **Data backups** - Schedule nightly database backups to cloud storage

- **Report generation** - Generate and email weekly reports every Monday at 9 AM

- **Cleanup jobs** - Delete old logs or temporary files daily at midnight

- **API sync** - Fetch data from external APIs hourly and update your systems
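The schedules in the list above map onto cron expressions roughly as follows. The sketch assumes the standard five-field cron syntax (minute, hour, day of month, month, day of week); whether RapidForge accepts extensions such as a seconds field is not stated here. `schedule_for` and the task names are hypothetical and exist only to keep the examples in one place.

```bash
#!/usr/bin/env bash
# Sketch: standard five-field cron expressions for the use cases above.
# Fields: minute hour day-of-month month day-of-week

# schedule_for is a hypothetical helper that prints the expression for a task.
schedule_for() {
  case "$1" in
    health-check)  echo '*/5 * * * *' ;;  # every 5 minutes
    backup)        echo '0 2 * * *'   ;;  # nightly at 02:00 (hour chosen arbitrarily)
    weekly-report) echo '0 9 * * 1'   ;;  # every Monday at 09:00
    cleanup)       echo '0 0 * * *'   ;;  # daily at midnight
    api-sync)      echo '0 * * * *'   ;;  # at the start of every hour
    *) echo "unknown task: $1" >&2; return 1 ;;
  esac
}
```

For example, `schedule_for weekly-report` prints `0 9 * * 1`, which is the value you would enter as the schedule for the weekly report task.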

## Email (Coming Soon)

Set up periodic email checks and trigger workflows based on specific email events. For example, send Slack alerts to your security team if an email contains malicious content.

# Credentials

RapidForge supports two credential types.

**Text credentials** are simple text values that can be used in scripts. They are injected into your scripts as environment variables, which you can access using the `CRED_{CREDENTIAL_NAME}` format.

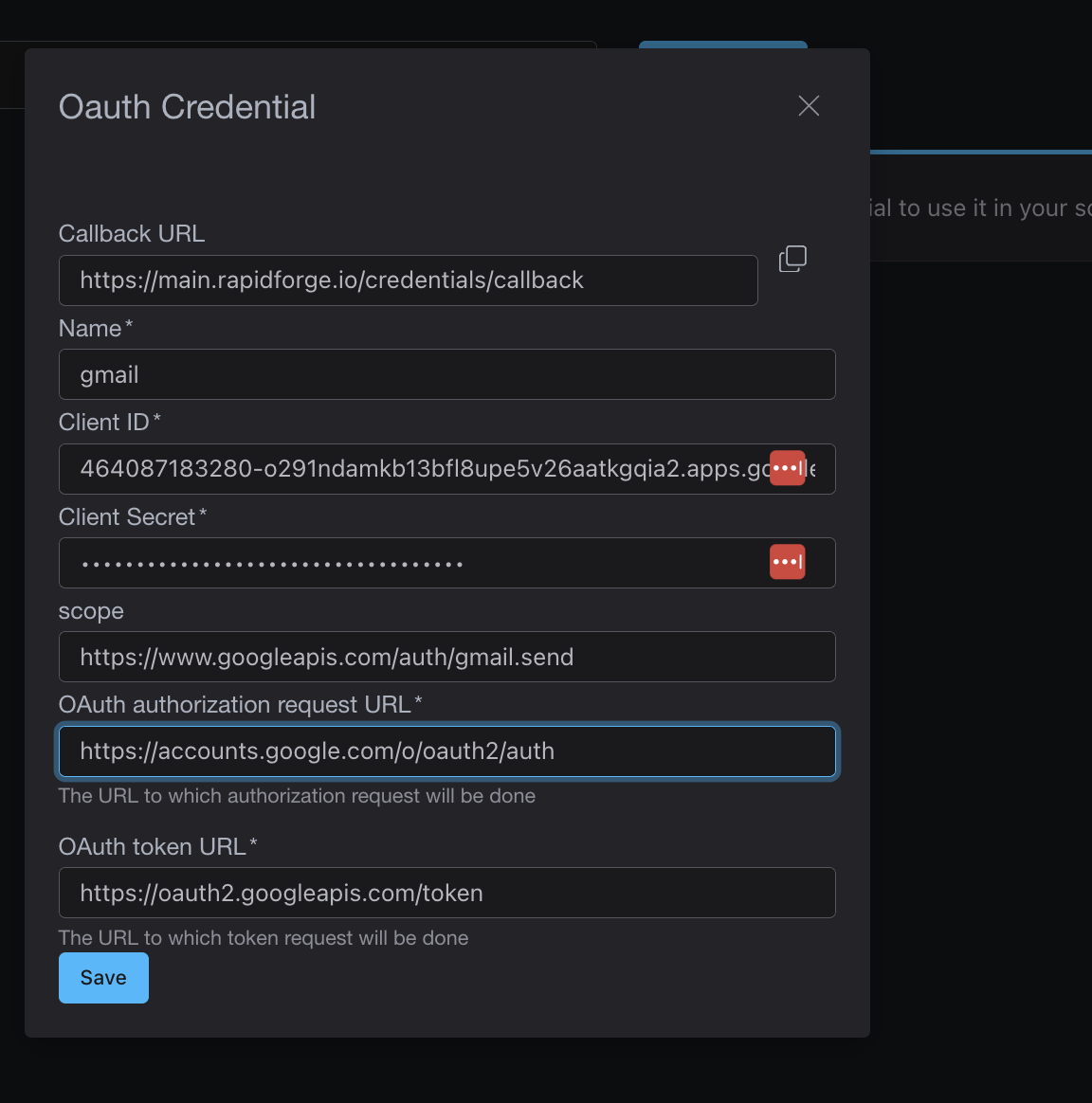

**OAuth credentials** are credentials that you save by completing an OAuth flow with a third-party service. RapidForge provides a callback address and a few other parameters for configuring the OAuth flow. Once the flow is configured, the access token is saved, and if a refresh token is available, the access token is refreshed periodically. You can use the access token in scripts just like text credentials.
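A minimal sketch of reading a credential inside a script is shown below. It assumes a credential saved under the hypothetical name `API_TOKEN`, which would then be available as `CRED_API_TOKEN` following the format above. The URL is a placeholder, and sending the token as a Bearer header is an assumption about the target API rather than something RapidForge requires.

```bash
#!/usr/bin/env bash
# Sketch: use an injected credential in a script.
# CRED_API_TOKEN assumes a credential saved under the hypothetical name
# API_TOKEN; https://api.example.com is a placeholder URL.

# Print an Authorization header, failing loudly if the credential is missing.
auth_header() {
  printf 'Authorization: Bearer %s' "${CRED_API_TOKEN:?CRED_API_TOKEN is not set}"
}

# Call a protected API with the credential (defined here, not invoked).
fetch_profile() {
  curl -sS -H "$(auth_header)" 'https://api.example.com/v1/profile'
}
```

The `${VAR:?message}` expansion stops the script with an error when the variable is unset or empty, which is usually preferable to sending a request with a blank token.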

# How RapidForge is an alternative to Tines

## Tines Overview

[Tines](https://www.tines.com/) is a low-code automation platform primarily targeting security and IT teams. It's designed for SOAR (Security Orchestration, Automation, and Response) workflows like incident response, threat intelligence, and security operations. Tines offers a visual story builder with drag-and-drop actions, built-in data manipulation through spreadsheet-like formulas, and collaboration features like shared notes and multiplayer mode for team-based workflow development. It provides a free community edition in the cloud with limited features and paid enterprise plans with on-premise deployment options.

## RapidForge Overview

RapidForge is an open source platform (Apache 2.0 license) designed for building applications and automating workflows across various industries, rather than focusing solely on security automation. It allows automation using Bash or [Lua](https://www.lua.org/) scripts that integrate directly into the platform. RapidForge features a drag-and-drop editor for designing web pages and internal applications. It's a completely free, self-hosted platform that deploys as a single binary with zero dependencies.

## Key Differences

| Feature | RapidForge | Tines |
|---------|-----------|-------|
| **Primary Focus** | General internal tools & automation | Security automation (SOAR) |
| **Pricing** | Open source & free (Apache 2.0) | Free community tier; enterprise pricing |
| **Deployment** | Single binary, self-hosted | Cloud or on-premise (enterprise) |
| **Automation Style** | Bash/Lua scripts | Visual story builder with actions |
| **Dependencies** | Zero dependencies | Cloud infrastructure or complex on-prem setup |
| **UI Building** | Drag-and-drop page editor | Workflow pages and forms |
| **Data Manipulation** | Via scripts | Built-in formulas (spreadsheet-like) |
| **Collaboration** | Self-hosted teams | Multiplayer mode, shared notes |
| **Air-gap Support** | Native support | Enterprise feature |
| **Target Industry** | Cross-industry | Security & IT teams |

## When to Choose RapidForge

- You need automation beyond just security workflows

- You want a completely free, self-hosted solution

- You prefer scripting (Bash/Lua) over visual builders

- You need lightweight deployment with zero dependencies

- You're working in air-gapped environments

- You want to build web interfaces alongside automations

## When to Choose Tines

- Your primary focus is security automation (SOAR)

- Your team prefers visual, no-code workflow building

- You want spreadsheet-like formulas for data manipulation

- You need real-time collaboration features (multiplayer mode)

- You prefer a managed cloud solution for security workflows

## Conclusion

Tines excels as a specialized SOAR platform for security and IT teams with its visual story builder and security-focused templates. RapidForge offers a more general-purpose platform for building internal tools and automations across any industry, with the advantage of being completely free and simple to deploy. Choose Tines for dedicated security automation; choose RapidForge for versatile internal tool development with scripting flexibility.

# How RapidForge is an alternative to Windmill

## Windmill Overview

[Windmill](https://www.windmill.dev/) is a comprehensive, enterprise-grade workflow engine and developer platform backed by Y Combinator. It supports scripting in 20+ languages including Python, TypeScript, Go, PHP, Rust, Bash, C#, and SQL. Windmill offers a full-featured WebIDE, flow orchestrator and app builder with low-code capabilities. It's designed for large-scale deployments with features like worker groups, Git-based collaboration, SOC 2 Type II compliance, RBAC, SSO, and extensive integrations with databases and cloud platforms.

## RapidForge Overview

RapidForge is an open source platform (Apache 2.0 license) that takes a minimalist approach as a single binary web server with zero external dependencies. It focuses on simplicity by supporting Bash and Lua scripts, making it ideal for teams that want to quickly turn scripts into webhooks, cron jobs, and web pages without framework overhead. RapidForge is self-hosted and particularly suited for air-gapped environments.

## Key Differences

| Feature | RapidForge | Windmill |
|---------|-----------|----------|
| **Deployment** | Single binary, zero dependencies | Docker/Kubernetes, requires PostgreSQL |
| **Languages** | Bash, Lua | Python, TypeScript, Go, PHP, Rust, Bash, C#, SQL (20+) |
| **Setup Time** | Minutes (one command) | Longer setup with more configuration |
| **Pricing** | Open source & free (Apache 2.0) | Free tier + paid enterprise plans |
| **Target Users** | Small teams, DevOps, sysadmins | Enterprise teams, data engineers |
| **Complexity** | Lightweight, minimal | Feature-rich, comprehensive |
| **Air-gap Support** | Native support | Enterprise feature |
| **Visual Flow Builder** | Basic UI | Advanced low-code flow orchestrator |
| **App Builder** | Drag-and-drop page editor | Full low-code app builder |

## When to Choose RapidForge

- You need a lightweight solution that deploys in one command

- Your automation tasks primarily involve Bash or Lua scripts

- You're working in air-gapped or restricted environments

- You prefer simplicity over extensive features

- You want a completely free, self-hosted solution without enterprise tiers

- You need to quickly expose existing CLI tools as webhooks or scheduled tasks

## When to Choose Windmill

- You need support for multiple programming languages (Python, TypeScript, Go, etc.)

- You require enterprise features like RBAC, SSO, and SOC 2 compliance

- You need extensive third-party integrations (100+ APIs)

- You have a large team requiring Git-based collaboration workflows

- You need advanced monitoring and observability features

## Conclusion

RapidForge excels at simplicity and rapid deployment, turning scripts into production-ready endpoints with minimal setup. Windmill offers a more comprehensive platform for enterprise-scale operations with extensive language support and collaboration features. Choose RapidForge when you value simplicity and speed; choose Windmill when you need a full-featured workflow engine with enterprise capabilities.

# How RapidForge is an alternative to n8n

[n8n](https://n8n.io/) and RapidForge both enable workflow automation, but they approach the problem from different angles and serve different primary use cases.

## n8n Overview

n8n is a popular open source workflow automation tool with a visual node-based editor. It specializes in connecting various services through 400+ pre-built integrations (nodes) including Slack, Google Sheets, Airtable and many SaaS applications. n8n offers both cloud-hosted and self-hosted options, with the self-hosted version requiring Docker and a database setup.

## RapidForge Overview

RapidForge is an open source development platform (Apache 2.0 license) that goes beyond workflow automation. It provides a complete solution for building custom applications, web interfaces, webhooks, and scheduled tasks, all packaged in a single, self-hosted binary with zero external dependencies. RapidForge uses Bash and Lua scripts for business logic rather than visual node connections.

## Key Differences

| Feature | RapidForge | n8n |
|---------|-----------|-----|
| **Approach** | Script-based (Bash/Lua) | Visual node-based editor |
| **Deployment** | Single binary, zero dependencies | Docker + database required |
| **Pre-built Integrations** | CLI tools, any API via scripts | 400+ native nodes |
| **Pricing** | Open source & free (Apache 2.0) | Free self-hosted; cloud plans from $20/month |
| **UI Building** | Drag-and-drop page editor | Limited (workflow forms) |
| **Webhook Support** | Native, instant setup | Native support |
| **Scheduling** | Built-in cron jobs | Built-in scheduling |
| **Air-gap Support** | Native support | Possible but complex |
| **Learning Curve** | Scripting knowledge | Visual, low-code |

## When to Choose RapidForge

- You need to build web interfaces alongside automations

- You prefer writing scripts over visual workflow builders

- You need a lightweight, dependency-free deployment

- You're working in air-gapped or isolated environments

- You want to expose existing CLI tools as APIs

- You need a completely free solution

## When to Choose n8n

- You need quick integrations with popular SaaS tools

- Your team prefers visual, drag-and-drop workflow building

- You want pre-built connectors for services like Slack, Notion, or Airtable

## Conclusion

n8n excels at connecting SaaS applications through its extensive library of pre-built integrations and visual workflow editor. RapidForge provides a broader platform for building complete internal tools including web UIs, APIs, and automations using familiar scripting languages.

# How RapidForge is an alternative to Retool

[Retool](https://retool.com/) and RapidForge are both platforms designed to streamline the development of internal tools, yet they cater to different user needs and technical preferences.

## Retool Overview

Retool is a popular low-code platform for building internal tools, dashboards and admin panels. It offers a drag-and-drop interface with pre-built components that connect to databases (PostgreSQL, MySQL, MongoDB) and APIs. Retool uses JavaScript for customization and provides features like role-based access control, audit logs and SSO for enterprise users. It's cloud-hosted by default with self-hosted options available for enterprise plans.

## RapidForge Overview

RapidForge is an open source, self-hosted platform (Apache 2.0 license) that supports scripting in Bash and Lua, making it accessible to users familiar with these languages. It deploys as a single binary with zero dependencies, allowing for quick deployment in minutes. RapidForge focuses on turning scripts into webhooks, cron jobs, and web pages with a straightforward approach.

## Key Differences

| Feature | RapidForge | Retool |
|---------|-----------|--------|
| **Pricing** | Open source & free (Apache 2.0) | Free tier limited; paid plans start at $10/user/month |
| **Deployment** | Single binary, self-hosted | Cloud-hosted; self-hosted for enterprise |
| **Languages** | Bash, Lua | JavaScript, SQL |
| **Setup Time** | Minutes (one command) | Longer setup with configuration |
| **Dependencies** | Zero dependencies | Requires database connections |
| **UI Builder** | Drag-and-drop page editor | Extensive component library |
| **Target Users** | DevOps, sysadmins, small teams | Business apps, data teams |
| **Learning Curve** | Low (familiar scripting) | Moderate (JavaScript required) |
| **Air-gap Support** | Native support | Enterprise feature only |

## When to Choose RapidForge

- You need a completely free solution with no per-user pricing

- You prefer working with Bash or Lua scripts

- You need to deploy quickly without complex setup

- You're working in air-gapped or restricted environments

- You want a lightweight, dependency-free solution

- Your use case involves exposing CLI tools as web endpoints

## When to Choose Retool

- You need a rich library of pre-built UI components

- Your team is comfortable with JavaScript

- You're building complex data-heavy dashboards

- You need extensive database connectors out of the box

- You prefer a managed cloud solution

- You require enterprise features like SAML SSO and audit logs

## Conclusion

Retool excels at building polished internal applications with its extensive component library and database integrations. RapidForge offers a simpler, cost-free alternative for teams that want to turn scripts into production tools without the overhead of a complex platform. Choose RapidForge for simplicity and zero cost; choose Retool for feature-rich internal app development.

# Alternatives

Where RapidForge fits among other tools.

These pages help search visitors quickly understand the differences between RapidForge and the tools they may already know.

- **n8n** - Script-based automation with a lighter deployment model than a visual workflow builder.

- **Retool** - A simpler self-hosted option for teams who want scripts, pages, and automations without per-user pricing.

- **Tines** - General-purpose internal tools and automation instead of a security-only workflow platform.

- **Windmill** - A minimal single-binary path for Bash and Lua when you do not need a larger platform.

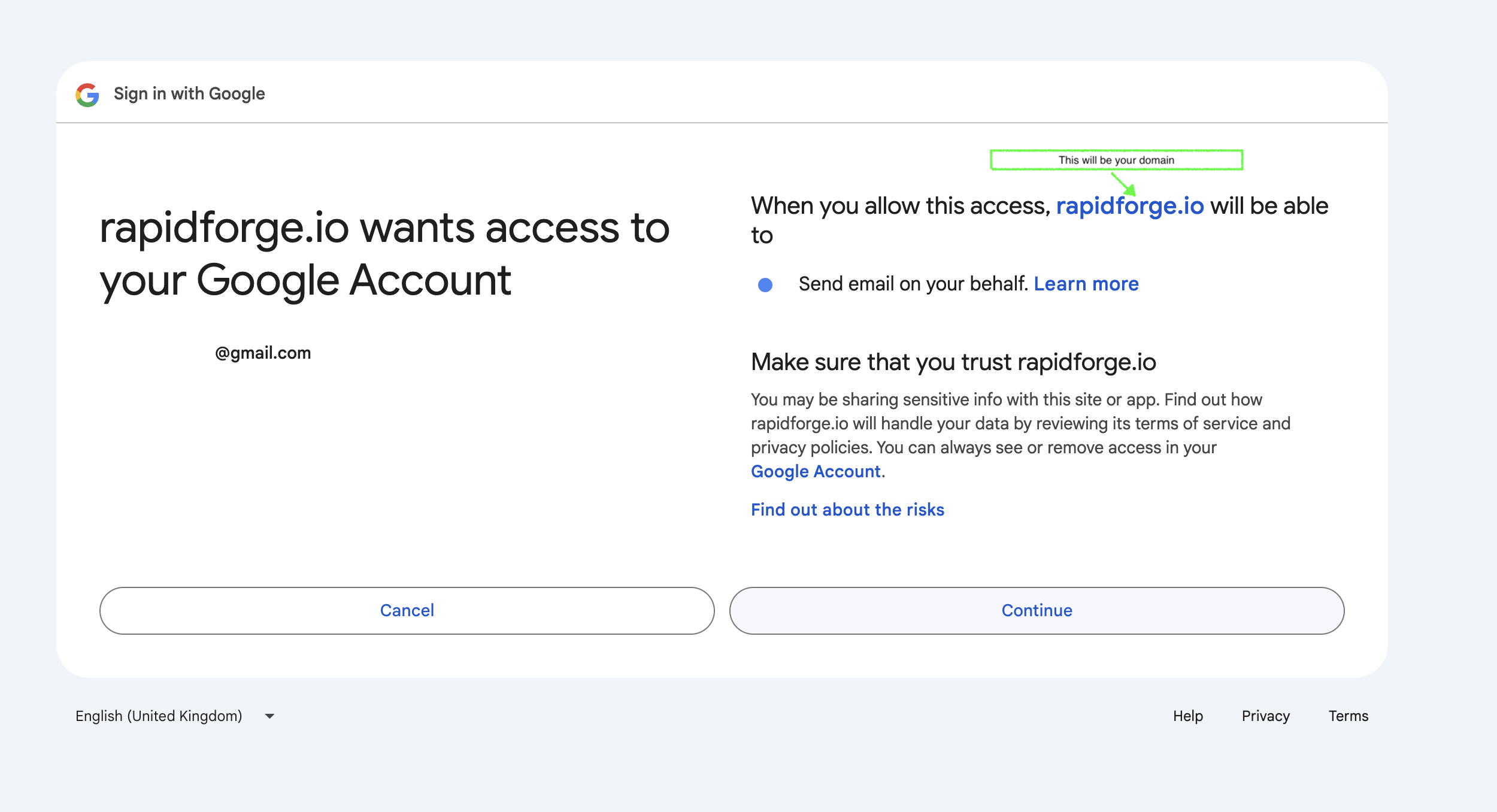

Once you save this, RapidForge takes you to the OAuth flow. You should see something like this.

After that flow, RapidForge saves your credentials, and you can use them to send emails in scripts.

## Sending Emails

Here is a simplified example of how to send emails using RapidForge and the Gmail API.

```bash
# If you create a POST endpoint, it is recommended that you authenticate
# requests using headers. You can access headers via
# HEADER_
```

Create a POST endpoint called `/webhook/login` inside the block and add the following script. Don't forget to set the form action to this endpoint.

```bash
# Create JSON payload
payload=$(cat <
```